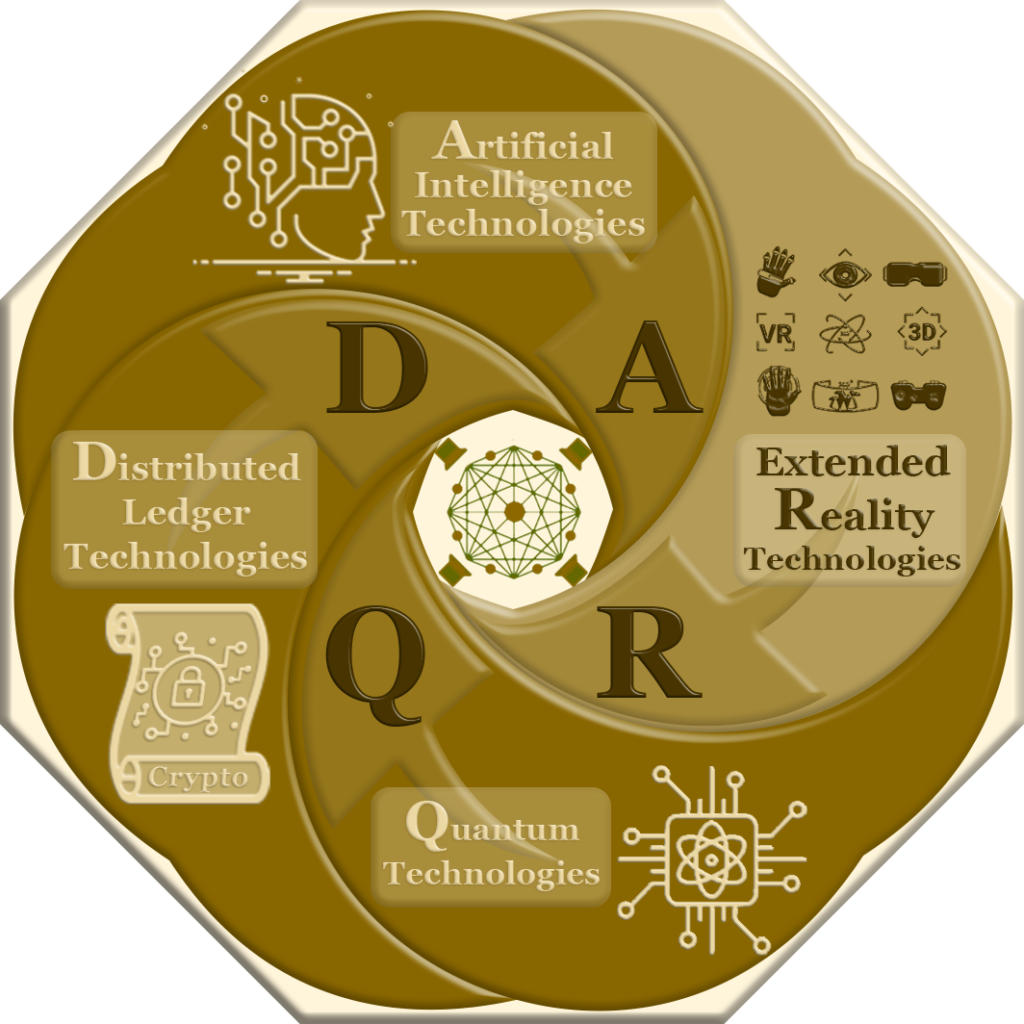

DARQ is an acronym for four groups of emerging technologies: Distributed Ledger Technologies (DLT), Artificial Intelligence (AI), Extended Reality (XR), and Quantum Technologies (QT)[1]. Each of these technologies stands at the forefront of technological advancement. In combination, they are expected to have a substantial impact on multiple industries, including finance[2], health care[3], manufacturing, travel and tourism, management systems[4], information technology, and renewable energy[5].

Core Technologies

DARQ technologies represent a convergence of these four transformative technologies, offering synergies and new possibilities for innovation across various industries. Combining these technologies can unlock novel solutions, enhance security, improve efficiency, enable advanced problem-solving, and create immersive user experiences[2].

DLT Distributed Ledger Technologies

Distributed Ledger Technology (DLT) refers to a database architecture that is distributed across multiple sites, countries, or institutions.[7] It operates as a decentralized system in which data is stored across a network of computers, commonly referred to as nodes, rather than in a single central location. This enables secure and transparent transactions without relying on intermediaries.[8] DLT is founded on cryptographic algorithms, which ensure the integrity and security of the stored data.[7]
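The role of cryptography in protecting integrity can be illustrated with a minimal, single-node sketch. Each block stores the SHA-256 hash of its predecessor, so altering an earlier record breaks the link to every later block. This is a toy under stated assumptions: there is no network, no consensus protocol, and the `Block` and `Ledger` names are hypothetical. It is not how any particular DLT is implemented.

```python
import hashlib
import json
from dataclasses import dataclass, field


@dataclass
class Block:
    index: int
    data: str
    prev_hash: str

    def hash(self) -> str:
        # Hash a canonical JSON encoding of the block's fields.
        payload = json.dumps(
            {"index": self.index, "data": self.data, "prev_hash": self.prev_hash},
            sort_keys=True,
        )
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


@dataclass
class Ledger:
    blocks: list = field(default_factory=list)

    def append(self, data: str) -> Block:
        # The first block links to a fixed all-zero "genesis" hash.
        prev = self.blocks[-1].hash() if self.blocks else "0" * 64
        block = Block(len(self.blocks), data, prev)
        self.blocks.append(block)
        return block

    def is_valid(self) -> bool:
        # Every block must reference the current hash of its predecessor.
        for prev, curr in zip(self.blocks, self.blocks[1:]):
            if curr.prev_hash != prev.hash():
                return False
        return True


ledger = Ledger()
for tx in ["alice->bob:5", "bob->carol:2", "carol->dave:1"]:
    ledger.append(tx)
print(ledger.is_valid())                  # True
ledger.blocks[0].data = "alice->bob:500"  # tamper with history
print(ledger.is_valid())                  # False
```

This check alone cannot detect a change to the newest block, because no later block links to it yet. In a real distributed ledger, many nodes hold copies of the chain, and a consensus protocol decides which blocks are accepted.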

DLT also serves as the foundation for digital assets such as cryptocurrencies, which are digital or virtual tokens built on DLT that use cryptographic techniques for security.[9] These decentralized currencies operate autonomously, without the involvement of central banks.[8] Well-known examples include Cardano, Ethereum, Solana, and Tron.

AI Artificial Intelligence Technologies

Artificial Intelligence (AI) refers to the development of computer systems that can perform tasks that typically require human intelligence, such as learning, problem-solving, and decision-making. AI is a broad field that encompasses many different techniques and approaches, including machine learning, natural language processing, computer vision, and robotics.

Large language models (LLMs) are deep learning models with very large numbers of parameters. They began to emerge around 2018 and have since been used in a variety of fields, including natural language processing, healthcare[10][11], chemistry[12], academic research[13], and software development[14]. These models are trained on vast amounts of text using unsupervised (more precisely, self-supervised) methods[15].
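A deliberately tiny stand-in can show what self-supervised training means. The training targets are simply the next tokens of the raw text, so no human labelling is needed. The sketch below is a word-level bigram counter with illustrative function names. It is not a neural network. Real LLMs learn the same next-token objective with transformer models over subword tokens and vastly more parameters.

```python
from collections import Counter, defaultdict


def next_token_pairs(tokens):
    # Self-supervision: each (context, target) pair is taken from the text itself.
    return list(zip(tokens, tokens[1:]))


def train_bigram(text):
    # Count how often each word follows each context word.
    counts = defaultdict(Counter)
    for context, target in next_token_pairs(text.split()):
        counts[context][target] += 1
    return counts


def predict_next(model, word):
    # Return the most frequent continuation seen in training, or None if unseen.
    if word not in model:
        return None
    return model[word].most_common(1)[0][0]


corpus = "the cat sat on the mat the cat ate"
model = train_bigram(corpus)
print(next_token_pairs("the cat sat".split()))  # [('the', 'cat'), ('cat', 'sat')]
print(predict_next(model, "the"))               # cat
```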

XR Extended Reality Technologies

Extended Reality (XR) is a term used to describe a range of technologies that offer immersive and interactive experiences beyond what is possible with traditional screens or interfaces. These technologies include Virtual Reality (VR), Augmented Reality (AR), Mixed Reality (MR), and other related technologies.

One prominent application of XR is the metaverse. This is a collective, shared virtual space where users can interact with each other and with digital objects in real time, creating a new form of interconnected reality. It can be thought of as a massive, persistent, and dynamic virtual universe that exists parallel to the physical world[16].

QT Quantum Technologies

Quantum technology is based on the principles of quantum mechanics, which describes the behavior of matter and energy at the atomic and subatomic level. It exploits distinctive properties of quantum systems, such as superposition and entanglement, to perform calculations and operations that are beyond the practical reach of classical computers. Honeywell estimates that quantum technology could reach a value of $1 trillion within the next three decades, which underlines the field's scientific and economic significance for researchers and investors[17]. Scheidsteger et al.[18] divide second-generation quantum technologies (QT 2.0) into four broad fields. These fields overlap considerably and do not cover every possible quantum technology[19].

Quantum information science (Q INFO)

Quantum information science (Q INFO) provides the theoretical foundation for QT 2.0. It centers on concepts such as the superposition of states and entanglement, which is the non-local correlation of quantum particles. The basic unit of quantum information technology is the qubit, or quantum bit, the quantum mechanical counterpart of the classical bit. A classical bit is either 0 or 1, whereas a qubit can be in a superposition of both.
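Superposition and entanglement can be made concrete with a toy state-vector sketch in plain Python. It assumes ideal, noiseless gates, and the function names are illustrative. A Hadamard gate puts one qubit into an equal superposition. A subsequent CNOT gate entangles it with a second qubit, producing a Bell state whose two measurement outcomes are perfectly correlated.

```python
import math

# A qubit is a normalized pair of complex amplitudes for |0> and |1>.
ZERO = [1 + 0j, 0j]


def hadamard(q):
    # H maps |0> to (|0> + |1>)/sqrt(2), an equal superposition.
    a, b = q
    s = 1 / math.sqrt(2)
    return [s * (a + b), s * (a - b)]


def probabilities(state):
    # Born rule: the probability of each basis outcome is |amplitude|^2.
    return [abs(amp) ** 2 for amp in state]


def tensor(q1, q2):
    # Two-qubit state in basis order |00>, |01>, |10>, |11>.
    return [a * b for a in q1 for b in q2]


def cnot(state):
    # Flip the second (target) qubit when the first (control) qubit is 1,
    # i.e. swap the amplitudes of |10> and |11>.
    a00, a01, a10, a11 = state
    return [a00, a01, a11, a10]


plus = hadamard(ZERO)
print([round(p, 3) for p in probabilities(plus)])  # [0.5, 0.5]

bell = cnot(tensor(plus, ZERO))
print([round(p, 3) for p in probabilities(bell)])  # [0.5, 0.0, 0.0, 0.5]
```

Only the outcomes 00 and 11 can occur, each with probability 1/2. Measuring one qubit therefore fixes the result for the other. The Bell state cannot be written as a product of two single-qubit states, which is what makes it entangled.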

Quantum metrology, sensing, imaging, and control (Q METR)

Quantum metrology and sensing offer measurement techniques whose precision can surpass what is achievable within classical frameworks. By exploiting the sensitivity of entangled states to disturbances, the latest generation of quantum logic clocks has achieved marked gains in accuracy. Quantum state tomography reconstructs an unknown quantum state from a complete set of measurements performed on many identically prepared copies. This makes it possible to capture and characterize quantum states accurately.
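Single-qubit state tomography can be sketched as follows. The sketch assumes ideal projective measurements of the Pauli observables X, Y and Z on many identical copies. Measurement outcomes are simulated with Python's `random` module, and the function names are illustrative. The expectation values estimated from the counts give the Bloch vector. The density matrix is then ρ = (I + r_x X + r_y Y + r_z Z)/2.

```python
import random


def measure_counts(bloch, basis, shots, rng):
    # Simulated measurement of one Pauli observable on `shots` identical copies:
    # outcome +1 occurs with probability (1 + r_k) / 2.
    p_plus = (1 + bloch[basis]) / 2
    plus = sum(rng.random() < p_plus for _ in range(shots))
    return plus, shots - plus


def reconstruct_bloch(counts):
    # Estimate each expectation value <sigma_k> = (N+ - N-) / N.
    return {k: (n_plus - n_minus) / (n_plus + n_minus)
            for k, (n_plus, n_minus) in counts.items()}


def density_matrix(r):
    # rho = (I + rx X + ry Y + rz Z) / 2, written out as a 2x2 complex matrix.
    rx, ry, rz = r["x"], r["y"], r["z"]
    return [[(1 + rz) / 2, (rx - 1j * ry) / 2],
            [(rx + 1j * ry) / 2, (1 - rz) / 2]]


rng = random.Random(7)
true_state = {"x": 1.0, "y": 0.0, "z": 0.0}  # the |+> state
counts = {k: measure_counts(true_state, k, 10_000, rng) for k in "xyz"}
estimate = reconstruct_bloch(counts)
rho = density_matrix(estimate)
print({k: round(v, 2) for k, v in estimate.items()})
```

With finite shots, the estimated Bloch components fluctuate around their true values. For n qubits, local Pauli tomography needs 3^n measurement settings. In practice, estimates are usually constrained (for example by maximum-likelihood estimation) so that the result is a physical density matrix.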

Quantum communication and cryptography (Q COMM)

Quantum cryptography, and quantum key distribution (QKD) in particular, is expected to play a critical role in mitigating the vulnerabilities of traditional cryptographic methods to quantum algorithms. One example is Shor's algorithm, which could break widely used public-key schemes such as RSA on a sufficiently large quantum computer[20].
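The idea behind QKD can be sketched with a toy simulation of BB84, the best-known QKD protocol. BB84 is chosen here as an assumption, since the text does not name a protocol. The sketch assumes an ideal, noiseless channel, and the function name `bb84` is illustrative. An intercept-resend eavesdropper who guesses measurement bases at random disturbs the photons and leaves a detectable error rate in the sifted key.

```python
import random


def bb84(n, rng, eavesdrop=False):
    """Toy BB84 over an ideal channel; returns (sifted key length, error rate)."""
    alice_bits = [rng.randint(0, 1) for _ in range(n)]
    alice_bases = [rng.randint(0, 1) for _ in range(n)]  # 0 = rectilinear, 1 = diagonal
    bob_bases = [rng.randint(0, 1) for _ in range(n)]

    bob_bits = []
    for bit, a_basis, b_basis in zip(alice_bits, alice_bases, bob_bases):
        basis, value = a_basis, bit  # basis and bit value carried by the photon
        if eavesdrop:
            # Intercept-resend attack: Eve measures in a random basis and resends.
            e_basis = rng.randint(0, 1)
            if e_basis != basis:
                value = rng.randint(0, 1)  # measuring in the wrong basis gives a random bit
            basis = e_basis
        # Bob gets the encoded bit only if his basis matches the photon's basis.
        bob_bits.append(value if b_basis == basis else rng.randint(0, 1))

    # Sifting: the bases (not the bits) are compared publicly; keep matching positions.
    keep = [i for i in range(n) if alice_bases[i] == bob_bases[i]]
    errors = sum(alice_bits[i] != bob_bits[i] for i in keep)
    return len(keep), (errors / len(keep) if keep else 0.0)


rng = random.Random(42)
print(bb84(2000, rng))                  # error rate 0.0: no eavesdropper
print(bb84(2000, rng, eavesdrop=True))  # error rate near 0.25
```

Without an eavesdropper, the sifted keys agree exactly. Intercept-resend pushes the error rate to about 25%, which Alice and Bob can detect by publicly comparing a random sample of their key. A real system would then apply error correction and privacy amplification.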

Quantum computation (Q COMP)

Quantum computation harnesses superposition and entanglement in arrays of qubits. This allows certain data-processing tasks and calculations to be performed far faster than is believed possible on classical computers, opening up new possibilities for advanced computational tasks.
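Grover's search algorithm, used here as one illustrative example, finds a marked item among N unsorted items with about (π/4)√N oracle queries. A classical search needs roughly N/2 queries on average. The state-vector simulation below assumes ideal, noise-free qubits, and the function name is illustrative. Simulating the algorithm on a classical machine does not, of course, yield any speedup.

```python
import math


def grover_search(n_items, marked):
    """Simulate Grover's algorithm on a state vector of n_items real amplitudes."""
    # Start in the uniform superposition over all items.
    amps = [1 / math.sqrt(n_items)] * n_items
    # About floor((pi/4) * sqrt(N)) iterations maximize the success probability.
    iterations = max(1, math.floor(math.pi / 4 * math.sqrt(n_items)))
    for _ in range(iterations):
        # Oracle: flip the sign of the marked item's amplitude.
        amps[marked] = -amps[marked]
        # Diffusion: reflect every amplitude about the mean amplitude.
        mean = sum(amps) / n_items
        amps = [2 * mean - a for a in amps]
    return [a * a for a in amps], iterations


probs, k = grover_search(4, marked=2)
print(k, [round(p, 3) for p in probs])  # 1 [0.0, 0.0, 1.0, 0.0]
```

For four items, a single iteration finds the marked item with certainty. For 64 items, six iterations give a success probability above 99%.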

DARQ Technologies Combined

DLT AI

The convergence of Distributed Ledger Technology (DLT) and Artificial Intelligence (AI) is an area of active research and development. Combining the two technologies has the potential to create applications and services that are more secure, transparent, and efficient than traditional systems. Research on how AI affects DLT focuses on AI-based consensus algorithms, smart contract security, decentralized coordination, DLT fairness, non-fungible tokens (NFTs), decentralized finance, decentralized autonomous organizations (DAOs), and more[21].

DLT XR

Companies and academic researchers have integrated DLT and XR to exploit the advantages of each technology, opening up new opportunities and improving user experiences. VR and AR serve as enabling technologies for blockchain-based solutions. They enhance the way users interact with digital content through natural interfaces such as gaze and gestures. This integration makes new and enriched experiences possible, such as virtual stores and immersive events. In addition, the psychological effects of interacting with content in interactive 3D environments are used to make experiences more effective, particularly in education and training.

Quantum DLT

Coarse-grained boson sampling (CGBS) is a variant of boson sampling that has been proposed as a quantum proof-of-work (PoW) scheme for blockchain consensus. Users perform boson sampling with input states that depend on the current block information and commit their samples to the network. A coarse-graining strategy is then determined and used to verify the samples and reach consensus. According to its proponents, this approach would let quantum computers validate transactions on networks such as Bitcoin, or on future, more efficient networks, while reducing electricity use by up to 90%.[22]

Quantum Internet

The quantum internet is a proposed system that uses quantum mechanical principles to transmit and manipulate information in a decentralized network. It differs from the classical internet chiefly in the nature of the data being transmitted: quantum bits (qubits) instead of classical bits.

Key features and aspects of the quantum internet include the following:

Quantum Key Distribution (QKD): One of the most well-known applications of quantum communication is QKD, which allows two parties to generate a shared, secret random key. The security of this key is guaranteed by the fundamental laws of quantum mechanics, ensuring that eavesdropping can be detected.

Quantum Entanglement: A core feature of the quantum internet is the distribution of entangled particles, usually photons, between network nodes. When particles are entangled, the state of one particle cannot be described independently of the other, regardless of the distance separating them. Entanglement alone, however, cannot be used to send information faster than light.

Teleportation: Not as sci-fi as it sounds, quantum teleportation is a process by which the state of a quantum system can be transmitted from one location to another with the help of two entangled particles and the transmission of classical information. This doesn’t mean teleporting matter, but rather the information associated with a quantum state.

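Quantum Repeaters: Over long distances, quantum signals decay and lose their coherence. Because of the no-cloning theorem, they cannot simply be amplified the way classical signals are. Quantum repeaters are proposed devices that instead extend entanglement across successive network segments, using techniques such as entanglement swapping and entanglement purification. This would allow quantum information to be transmitted over long distances.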

End-to-End Encryption: While the classical internet can also implement end-to-end encryption, the quantum internet could offer encryption that’s theoretically unbreakable, thanks to the principles of quantum mechanics.

Integration with Classical Internet: A quantum internet is not expected to replace the classical internet. Instead, the two will likely work in tandem. The quantum internet would handle tasks that require quantum resources, while the classical internet would continue to handle the vast majority of data transmission and processing, as it does today.

Applications Beyond Communication: Besides secure communication, a quantum internet could enable a network of quantum computers, allowing them to work together and solve problems that are currently too complex for classical computers. Additionally, it might enable more accurate time-keeping, improved GPS systems, and more advanced forms of distributed quantum computing.
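The teleportation feature in the list above can be followed step by step in a small state-vector simulation. The sketch assumes ideal gates, a perfect shared Bell pair and a noiseless classical channel, and all names in it are illustrative. Only two classical bits travel from Alice to Bob, yet Bob ends up holding the original amplitudes. Alice's measurement destroys her copy, consistent with the no-cloning theorem.

```python
import math
import random

N_QUBITS = 3  # qubit 0: state to send, qubit 1: Alice's half, qubit 2: Bob's half
S = 1 / math.sqrt(2)
H = [[S, S], [S, -S]]
X = [[0, 1], [1, 0]]
Z = [[1, 0], [0, -1]]


def mask(qubit):
    # Qubit 0 is the most significant bit of a basis-state index.
    return 1 << (N_QUBITS - 1 - qubit)


def apply_gate(state, gate, qubit):
    # Apply a 2x2 gate to one qubit of the 8-amplitude state vector.
    new = [0j] * len(state)
    for i, amp in enumerate(state):
        b = 1 if i & mask(qubit) else 0
        for out in (0, 1):
            j = i if out == b else i ^ mask(qubit)
            new[j] += gate[out][b] * amp
    return new


def apply_cnot(state, control, target):
    # Flip the target bit of every basis state whose control bit is 1.
    new = [0j] * len(state)
    for i, amp in enumerate(state):
        new[i ^ mask(target) if i & mask(control) else i] += amp
    return new


def measure(state, qubit, rng):
    # Projective measurement of one qubit: returns the outcome and collapsed state.
    p1 = sum(abs(a) ** 2 for i, a in enumerate(state) if i & mask(qubit))
    outcome = 1 if rng.random() < p1 else 0
    norm = math.sqrt(p1 if outcome else 1 - p1)
    keep = mask(qubit) if outcome else 0
    return outcome, [a / norm if (i & mask(qubit)) == keep else 0j
                     for i, a in enumerate(state)]


def teleport(alpha, beta, rng):
    state = [0j] * 8
    state[0b000], state[0b100] = alpha, beta           # |psi>|0>|0>
    state = apply_cnot(apply_gate(state, H, 1), 1, 2)  # Bell pair on qubits 1 and 2
    state = apply_gate(apply_cnot(state, 0, 1), H, 0)  # Alice's Bell-basis rotation
    m0, state = measure(state, 0, rng)
    m1, state = measure(state, 1, rng)
    # Only the two classical bits (m0, m1) go to Bob, who applies X^m1, then Z^m0.
    if m1:
        state = apply_gate(state, X, 2)
    if m0:
        state = apply_gate(state, Z, 2)
    base = (m0 << 2) | (m1 << 1)
    return state[base], state[base | 1]


alpha, beta = 0.6, 0.8j  # an arbitrary normalized qubit state
a, b = teleport(alpha, beta, random.Random(0))
print(round(abs(a - alpha), 9), round(abs(b - beta), 9))  # 0.0 0.0
```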

Quantum AI

A Quantum Language Model (QLM) is a language model built on the principles of quantum probability theory, the mathematical framework that describes the behavior of quantum systems. A QLM is a stochastic model that can exploit quantum correlations arising from interference and entanglement. The natural language processing (NLP) community has explored this approach to language modeling in recent years. Basile and Tamburini's QLM is a proof-of-concept study. It aims to show the potential of the approach rather than to build a complete application for language modeling in every setting[23].

Quantum natural language processing (QNLP) is the application of quantum computing to natural language processing (NLP). It exploits superposition, entanglement, and interference to run NLP models or language-related tasks on quantum hardware. It encodes word embeddings as parameterised quantum circuits, with the aim of solving NLP tasks more efficiently than classical computers[24].

The quantum many-body wave function (QMWF) inspired language modeling approach was proposed to address limitations of existing quantum-inspired language models (QLMs). These limitations concern modeling interactions among words with multiple meanings and integrating with neural networks[25].

DARQ Technologies as IT evolution

DARQ technologies have accelerated the evolution of information technology (IT) and shortened its convergence time (CT), the time it takes for distinct technological paradigms to converge. Their integration with the wider IT ecosystem has driven a paradigm shift in industries and societies. Together, these technologies have accelerated the development and implementation of innovative solutions. They provide greater efficiency, balanced automation, and better decision-making across diverse domains. They also have the potential to shape a better future by addressing global challenges, empowering individuals, and driving economic growth. Klaus Schwab argues in his book “The Fourth Industrial Revolution” (4 IR) that a human-centered approach and collaboration allow technology to be harnessed for positive change and to improve the state of the world[26].

Information Technologies Convergence

| Year | Event | Technology stage | Era | Acronym |
|---|---|---|---|---|
| 1950 | Turing Test | Mainframe | Web 1.0 | |
| 1964 | System/360 | Mainframe | Web 1.0 | |
| 1964 | Server/Host | CS & PC | Web 1.0 | |
| 1969 | ARPANET | Internet | Web 1.0 | |
| 1972 | SAP | CS & PC | Web 1.0 | |
| 1977 | PC | CS & PC | Web 1.0 | |
| 1990 | System/390 | Mainframe | Web 1.0 | |
| 1991 | Public Internet | Internet | Web 1.0 | |
| 1994 | Amazon | Internet | Web 1.0 | |
| 1997 | Big Data | Big Data | Web 2.0 | |
| 1997 | IBM: Deep Blue | Artificial Intelligence | Web 3.0 | DARQ |
| 1999 | salesforce.com | Social | Web 2.0 | SMAC |
| 1999 | IoT, M2M | IoT | Web 3.0 | |
| 2005 | Web 2.0 start | Social, Mobile, Cloud | Web 2.0 | SMAC |
| 2006 | AWS | Social, Mobile, Cloud | Web 2.0 | SMAC |
| 2007 | iPhone | Social, Mobile, Cloud | Web 2.0 | SMAC |
| 2008 | Bitcoin Whitepaper | DLT | Web 3.0 | DARQ |
| 2010 | PC sales peak | CS & PC | Web 1.0 | |
| 2010 | Self-driving cars | IoT | Web 3.0 | |
| 2010 | Oculus Rift | Extended Reality | Web 3.0 | DARQ |
| 2011 | D-Wave | Quantum Technology | Web 3.0 | DARQ |
| 2014 | IDC: 4.4 ZB | Big Data | Web 2.0 | |
| 2017 | 4 IR | IoT | Web 3.0 | |
| 2019 | Metaverse | Extended Reality | Web 3.0 | DARQ |
| 2020 | Quantum Supremacy | Quantum Technology | Web 3.0 | DARQ |

Web 1.0

Web 1.0 refers to the early stage of the World Wide Web, characterized by static web pages and limited interactivity. It was the first iteration of the web that emerged in the early 1990s and lasted until around the early 2000s.

Mainframe

The Turing Test is a test proposed by the mathematician and computer scientist Alan Turing in 1950 as a way to measure a machine’s ability to exhibit intelligent behavior that is indistinguishable from that of a human. It involves a human evaluator engaging in a conversation with both a machine and another human without knowing which is which. If the evaluator cannot consistently determine which is the machine based on their responses, the machine is said to have passed the Turing Test.

A mainframe computer is a large, powerful computer used by large organizations for critical tasks. It has more processing power than most other classes of computers but less than a supercomputer. Mainframes originated in the 1960s and are often used as servers. They played a crucial role in supporting infrastructure and hosting websites in the early Web era[27].

System/360 (1964) and System/390 (1990) are computer systems developed by IBM. System/360 was a family of mutually compatible computers that brought advances in hardware and software compatibility and in scalability, and it marked a significant milestone in computing history. System/390, an evolution of System/360, retained compatibility while adding features that improved performance, reliability, and scalability for enterprise computing. Both systems played crucial roles in mainframe computing, influencing large-scale data processing and business computing.

Client-Server & PC

The client-server model is a distributed application structure in which tasks are divided between servers and clients that communicate over a network. Examples include email, network printing, and the World Wide Web[28]. The model dates back at least to 1964 and the remote job entry architecture for OS/360, in which the host ran a remotely submitted job and returned the output.

In June 1972, SAP was founded as a private partnership. Its founders developed a real-time system for payroll and accounting that stored data locally instead of on punch cards. This became the company's flagship product and was later extended to serve other clients.

A personal computer (PC) is an affordable, user-friendly microcomputer designed for individual use. PCs have had a significant impact on people’s lives worldwide during the Digital Revolution[29]. The Altair 8800, introduced by MITS in 1974 and powered by the Intel 8080 microprocessor, is widely regarded as the first true personal computer and a catalyst for the microcomputer revolution[30]. In 1977, three companies released mass-marketed personal computers later known as the “1977 trinity”: the Commodore PET, the Apple II, and the TRS-80. These pre-assembled machines brought computing to a broader audience and shifted the focus from processor hardware development toward software applications.

The PC market grew continuously until 2010, and shipments peaked in 2012. Meanwhile, on January 27, 2010, Apple introduced the iPad. The tablet was widely adopted for web browsing, email, multimedia consumption, gaming, e-books, and more, marking a significant shift in the way people engage with technology.

Internet

The Internet's origins date back to the mainframe era. Its development began in the 1960s at the Advanced Research Projects Agency (ARPA), with the aim of enabling time-sharing of computers[31]. In 1969, the Advanced Research Projects Agency Network (ARPANET) connected its first computer nodes, starting what is now known as the Internet[32]. ARPANET gradually grew into a decentralized network linking remote centers and military bases across the United States[33]. Public data networks followed in 1972. These communication networks were operated by telecommunications companies and open to the general public, and they carried data between locations or users over long distances.

In the mid-1980s, the expansion of the internet created significant economic opportunities for commercial involvement and public services. MCI Mail and CompuServe connected to the internet in 1989, providing email and public access to hundreds of thousands of users. Shortly afterwards, on January 1, 1990, PSINet launched an additional internet backbone for commercial use, contributing to the development of the commercial internet in the years that followed[34].

Amazon.com, Inc., founded on July 5, 1994, by Jeff Bezos, is a prominent American multinational technology company known for its involvement in e-commerce, cloud computing, online advertising, digital streaming, and artificial intelligence[35].

Web 2.0

Web 2.0, also referred to as the participative or social web, encompasses websites that prioritize user-generated content, user-friendly interfaces, participatory culture, and interoperability, allowing compatibility with other products, systems, and devices for end users[36]. Coined in 1999 and popularized at the first Web 2.0 Conference in 2004, the term “Web 2.0” represents a general shift towards interactive websites that surpassed the static nature of the original Web, rather than indicating a formal change in the World Wide Web itself.

Salesforce, Inc. is an American company founded in 1999 that provides cloud computing services. Salesforce played a significant role in the Web 2.0 era by leveraging cloud computing and providing innovative software-as-a-service (SaaS) solutions. As one of the pioneers in delivering business applications through the internet, Salesforce helped shape the concept of cloud-based platforms and collaborative software tools. By offering a user-friendly and interactive interface, Salesforce empowered businesses to harness the potential of Web 2.0 technologies for customer relationship management (CRM) and sales automation.

Big Data

Big Data refers to datasets that are too large or complex to be handled by traditional data-processing software, often characterized by a high number of entries and attributes. Such datasets hold more information than can be grasped by analyzing the data in smaller portions[37].

In its 2013 predictions, IDC projected 4.4 zettabytes of data to be created or replicated that year, marking a 50% growth compared to 2012. IDC also noted that only a small fraction of the digital universe was being explored for analytic value[38].

SMAC: Social, Mobile, Analytics, Cloud

In March 2006, Amazon Web Services (AWS) introduced Amazon S3 cloud storage, followed by the launch of EC2 in August 2006. At that time, Andy Jassy, the founder and vice president of AWS, emphasized how Amazon S3 alleviated concerns for developers regarding data storage, security, availability, server maintenance costs, and storage capacity.

The original iPhone, also known as iPhone 2G or iPhone 1, was the first smartphone developed and marketed by Apple Inc. Released in the United States on June 29, 2007, the iPhone revolutionized the mobile phone industry by introducing a touch interface, minimal hardware buttons, and continuous internet access. It became Apple’s most successful product, paving the way for the App Store and transforming the smartphone industry[39].

The public cloud gained significant traction and became mainstream around the early to mid-2010s, as cloud computing technologies matured and their adoption increased. Organizations recognized the scalability, cost-effectiveness, and flexibility of public cloud services and began migrating their applications and data to public cloud platforms. Reliable and secure cloud infrastructure became widely available, and cloud management and security tools advanced, which together drove mainstream adoption. Today, the public cloud is a popular choice for businesses of all sizes, supporting both their operations and their innovation.

Web 3.0

IoT and Smart Machines

The Internet of Things (IoT) refers to physical objects equipped with sensors, processing capabilities, and software that can connect and exchange data with other devices and systems over the Internet or other communication networks. The concept emerged in the early 1980s, when a vending machine at Carnegie Mellon University became the first connected appliance. Mark Weiser’s 1991 paper on ubiquitous computing and conferences such as UbiComp helped shape the IoT vision. In 1994, Reza Raji described the concept as the automation of a wide range of devices. Companies such as Microsoft proposed solutions, and in 1999 Bill Joy discussed device-to-device communication.

A self-driving car, also called an autonomous car or robo-car, is a vehicle that can operate without human input. Self-driving cars are sophisticated smart machines that combine advanced technologies such as artificial intelligence, computer vision, and sensor systems to navigate and operate on roads autonomously. These vehicles can perceive their surroundings, interpret and analyze the data, make informed decisions, and safely navigate through various traffic situations without the need for human intervention. With the ability to learn and adapt from their experiences, self-driving cars represent a remarkable fusion of cutting-edge technology and automotive engineering, aiming to revolutionize transportation by improving safety, efficiency, and convenience on the roads.

DLT: Distributed Ledger Technology

The Bitcoin whitepaper is a document titled “Bitcoin: A Peer-to-Peer Electronic Cash System” written by an individual or group using the pseudonym Satoshi Nakamoto. It was published in 2008 and serves as the foundational blueprint for the Bitcoin cryptocurrency. The whitepaper outlines the key concepts and mechanisms behind Bitcoin, including its decentralized nature, the use of blockchain technology for maintaining a public ledger, and the process of mining to secure the network and validate transactions. It introduces the concept of a digital currency that operates without the need for intermediaries such as banks and provides a solution for the double-spending problem. The Bitcoin whitepaper has had a significant impact on the development and adoption of cryptocurrencies worldwide.
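The mining process outlined in the whitepaper can be sketched as a hash-puzzle search. This toy version is a simplification under stated assumptions. It hashes a plain string once with SHA-256, whereas Bitcoin applies SHA-256 twice to an 80-byte binary block header. It also uses a fixed difficulty instead of an automatically adjusted target, and the function names are hypothetical. The key asymmetry is preserved: finding a valid nonce is expensive, while checking one costs a single hash.

```python
import hashlib


def block_hash(block_data: str, nonce: int) -> int:
    # Single SHA-256 over a simple string encoding (Bitcoin hashes a binary header twice).
    digest = hashlib.sha256(f"{block_data}|{nonce}".encode("utf-8")).hexdigest()
    return int(digest, 16)


def mine(block_data: str, difficulty_bits: int, max_nonce: int = 2**32) -> int:
    """Search for a nonce whose hash is below the target (toy proof-of-work)."""
    target = 1 << (256 - difficulty_bits)  # more difficulty bits = smaller target
    for nonce in range(max_nonce):
        if block_hash(block_data, nonce) < target:
            return nonce
    raise RuntimeError("no valid nonce found")


def verify(block_data: str, nonce: int, difficulty_bits: int) -> bool:
    # Checking a claimed solution costs one hash; finding one takes ~2**difficulty_bits tries.
    return block_hash(block_data, nonce) < (1 << (256 - difficulty_bits))


data = "prev=genesis; tx: alice pays bob 5"
nonce = mine(data, difficulty_bits=16)
print(nonce, f"{block_hash(data, nonce):064x}"[:8])  # the hex digest starts with "0000"
```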

AI: Artificial Intelligence

Deep Blue, an IBM supercomputer, made history in the field of artificial intelligence. It was the first computer to defeat a reigning world chess champion, Garry Kasparov, in a match. The development began in 1985 at Carnegie Mellon University and later moved to IBM, undergoing name changes along the way. In 1996, Deep Blue lost to Kasparov but was upgraded and won a rematch in 1997. Its victory is a notable milestone in AI history and has been featured in various media.

XR: Extended Reality

In the late 1980s, Jaron Lanier, a prominent figure in the field, popularized the term “virtual reality.” Consumer VR headsets began to be released commercially in the 1990s. In 1992, Computer Gaming World predicted that affordable VR would be available by 1994.

Oculus Rift, developed by Oculus VR, revived the virtual reality industry by offering an affordable, high-quality VR experience. It was prototyped in 2011 and, after a successful Kickstarter campaign, launched as the DK1 development kit in 2013. The Rift went through several models before the consumer version, the Rift CV1, was released in 2016. It was replaced by the Oculus Rift S in 2019. Oculus Rift software is compatible with its successor, the Oculus Quest.

The “metaverse” is a concept from science fiction that envisions a virtual world created through VR and AR technologies, serving as a universal and immersive iteration of the Internet. In everyday language, the term refers to a network of 3D virtual worlds focused on social and economic connections. In 2019, Facebook introduced Facebook Horizon, a social VR world. In August 2021, it launched Horizon Workrooms, an open-beta collaboration app for remote work. Horizon Workrooms provides virtual meeting rooms, whiteboards, and video-call integration for up to 50 participants.

QT: Quantum Technology 2.0

D-Wave Systems Inc. is a Canadian company known for being the first to sell quantum computers that harness quantum effects. It has developed quantum computers with increasing numbers of qubits, and its latest system features 5,000 qubits. Its current computers specialize in quantum annealing, but the company has also announced plans to build universal gate-based quantum computers. In May 2011, D-Wave unveiled the D-Wave One, the world’s first commercially available quantum computer. It used a 128-qubit chipset and applied quantum annealing to optimization problems. Earlier prototypes, such as the 16-qubit Orion quantum computer, were demonstrated in 2007. D-Wave also showcased a 28-qubit quantum annealing processor fabricated at NASA’s Jet Propulsion Laboratory Microdevices Lab.

Quantum supremacy is the demonstration that a quantum computer can solve a problem that even the best classical computers cannot solve within a reasonable timeframe. It shows that, for that task, quantum computers have greater computational power than classical ones. In December 2020, researchers from the University of Science and Technology of China achieved quantum supremacy with their photonic quantum computer Jiuzhang. Using Gaussian boson sampling with 76 photons, Jiuzhang produced results in about 200 seconds that would take a classical supercomputer an estimated 2.5 billion years to compute[40].
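Permanents help explain why boson sampling is so hard to simulate classically. In the standard (Fock-state) version of boson sampling, each output probability is proportional to |Perm(A)|², where A is a submatrix of the interferometer's unitary. In Gaussian boson sampling, as used by Jiuzhang, the corresponding quantity is a hafnian. Computing permanents is #P-hard, and the best known exact algorithms, such as Ryser's formula sketched below, take time exponential in the matrix size. The sketch only illustrates this scaling and is not a boson-sampling simulator. The function name is illustrative.

```python
from itertools import combinations
from math import prod


def permanent_ryser(a):
    """Permanent of an n x n matrix by Ryser's inclusion-exclusion formula.

    perm(A) = (-1)^n * sum over non-empty column subsets S of
              (-1)^|S| * prod_i sum_{j in S} a[i][j]
    As written this costs O(2^n * n^2) operations; Gray-code ordering cuts it to O(2^n * n).
    """
    n = len(a)
    if n == 0:
        return 1  # the permanent of the empty matrix is 1 by convention
    total = 0
    for k in range(1, n + 1):
        for cols in combinations(range(n), k):
            # Product over rows of each row's sum restricted to the chosen columns.
            total += (-1) ** k * prod(sum(row[j] for j in cols) for row in a)
    return (-1) ** n * total


print(permanent_ryser([[1, 2], [3, 4]]))             # 10, i.e. 1*4 + 2*3
print(permanent_ryser([[1] * 5 for _ in range(5)]))  # 120, i.e. 5!
```

Unlike the permanent, the determinant can be computed in polynomial time by Gaussian elimination. The sign pattern that the permanent lacks is what makes that shortcut possible.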

References

- ^ “The post-digital era is upon us: Are you ready for what’s next” (PDF). Accenture Technology Vision 2019. Retrieved February 22, 2021.

- ^ Gigante, G.; Zago, A. (2023). “DARQ technologies in the financial sector: artificial intelligence applications in personalized banking”. Qualitative Research in Financial Markets. 15 (1): 29–57. doi:10.1108/QRFM-02-2021-0025. S2CID 248584255.

- ^ Miliard, M. (2021). “Accenture has a DARQ vision of healthcare’s ‘post-digital’ era”. Healthcare IT News.

- ^ Kisielnicki, J.; Zadrożny, J. (2021). “DARQ technology as a digital transformation strategy in terms of global crises”. Journal Name. 19 (3 (93)): 150–167.

- ^ Manoharan, R. (2021). “The Applied Energy Systems Enacting the Ever Green Energy for our planet: Confluence of DARQ Technologies”. SPAST Abstracts. 1 (1).

- ^ Alt, Rainer (2021). “Electronic Markets on the next convergence”. Electronic Markets. 31 (1): 1–9. doi:10.1007/s12525-021-00471-6. ISSN 1019-6781. S2CID 255576110.

- ^ Gourisetti, Sri Nikhil Gupta; Cali, Ümit; et al. (2021). “Standardization of the Distributed Ledger Technology cybersecurity stack for power and energy applications”. Sustainable Energy, Grids and Networks. 28: 100553. doi:10.1016/j.segan.2021.100553. ISSN 2352-4677. S2CID 240251135.

- ^ Silva, E.C.; da Silva, M.M. (2022). “Research contributions and challenges in DLT-based cryptocurrency regulation: a systematic mapping study”. Journal of Banking and Financial Technology. 6: 63–82. doi:10.1007/s42786-021-00037-2. S2CID 257161940.

- ^ Ferreira, A.; Sandner, P. G.; Dünser, T. (2021). “Cryptocurrencies, DLT and Crypto Assets – the Road to Regulatory Recognition in Europe”. Forthcoming in: Handbook on Blockchain. doi:10.2139/ssrn.3891401. S2CID 236425817.

- ^ Sallam, M. (2023). “ChatGPT Utility in Healthcare Education, Research, and Practice: Systematic Review on the Promising Perspectives and Valid Concerns”. Healthcare. 11 (6): 887. doi:10.3390/healthcare11060887. PMC 10048148. PMID 36981544.

- ^ Cascella, M.; Montomoli, J.; Bellini, V. (2023). “Evaluating the Feasibility of ChatGPT in Healthcare: An Analysis of Multiple Clinical and Research Scenarios”. J Med Syst. 47 (33): 33. doi:10.1007/s10916-023-01925-4. PMC 9985086. PMID 36869927.

- ^ White, A. D. (2023). “The future of chemistry is language”. Nature Reviews Chemistry. 7 (7): 457–458. doi:10.1038/s41570-023-00502-0. PMID 37208543. S2CID 258808161.

- ^ Rahman, Md. Mizanur; Terano, Harold Jan; Rahman, Md Nafizur; Salamzadeh, Aidin; Rahaman, Md. Saidur (2023). “ChatGPT and Academic Research: A Review and Recommendations Based on Practical Examples”. Journal of Education, Management and Development Studies. 3 (1): 1–12. doi:10.52631/jemds.v3i1.175. S2CID 257845986.

- ^ Ross, Steven I.; Martinez, Fernando; Houde, Stephanie; Muller, Michael; Weisz, Justin D. (2023). “The programmer’s assistant: Conversational interaction with a large language model for software development”. ACM Conference on Intelligent User Interfaces (IUI).

- ^ Birhane, A.; Kasirzadeh, A.; Leslie, D.; Wachter, S. (2023). “Science in the age of large language models”. Nat. Rev. Phys. 5 (5): 277–280. doi:10.1038/s42254-023-00581-4. S2CID 258361324.

- ^ Ritterbusch, Georg David; Teichmann, Malte Rolf (2023). “Defining the Metaverse: A Systematic Literature Review”. IEEE Access. 11: 12368–12377. doi:10.1109/ACCESS.2023.3241809. ISSN 2169-3536. S2CID 256562095.

- ^ “Quantum 2.0 technology: the revolution starts in the December 2021 edition of Physics World”. Physics World. 2021-12-01. Retrieved 2023-06-20.

- ^ Scheidsteger, Thomas; Haunschild, Robin; Bornmann, Lutz; Ettl, Christoph (2021-09-15). “Bibliometric Analysis in the Field of Quantum Technology”. Quantum Reports. 3 (3): 549–575. doi:10.3390/quantum3030036. ISSN 2624-960X.

- ^ Scheidsteger, Thomas; Haunschild, Robin; Ettl, Christoph (2022-11-24). “Historical Roots and Seminal Papers of Quantum Technology 2.0”. NanoEthics. 16 (3): 271–296. doi:10.1007/s11569-022-00424-z. ISSN 1871-4757. S2CID 253965428.

- ^ Ahn, Jongmin, et al. (19 January 2022). “Toward Quantum Secured Distributed Energy Resources: Adoption of Post-Quantum Cryptography (PQC) and Quantum Key Distribution (QKD)”. Energies. 15 (3): 714. doi:10.3390/en15030714.

- ^ Bellagarda, J. S.; Abu-Mahfouz, A. M. (2022). “An updated survey on the convergence of distributed ledger technology and artificial intelligence: Current state major challenges and future direction”. IEEE Access. 10: 50774–50793. doi:10.1109/ACCESS.2022.3173297. S2CID 248684391.

- ^ Singh, Deepesh; et al. (31 May 2023). “Proof-of-work consensus by quantum sampling”. arXiv:2305.19865 [quant-ph].

- ^ Basile, Ivano; Tamburini, Fabio (September 7–11, 2017). “Towards Quantum Language Models”. Proceedings of the 2017 Conference on Empirical Methods in Natural Language Processing. Copenhagen, Denmark. pp. 1840–1849.

- ^ Ganguly, S.; Morapakula, S.N.; Coronado, L.M.P. (2022). “Quantum natural language processing based sentiment analysis using lambeq toolkit”. 2022 Second International Conference on Power, Control and Computing Technologies (ICPC2T). pp. 1–6.

- ^ Zhang, Peng; Su, Zhan; Zhang, Lipeng; Wang, Benyou; Song, Dawei (Oct 2018). “A quantum many-body wavefunction inspired language modeling approach”. Proceedings of the 27th ACM International Conference on Information and Knowledge Management.

- ^ Schwab, Klaus (2017-01-03). The Fourth Industrial Revolution. Crown. ISBN 978-1-5247-5886-8.

- ^ “Computer Terminology – Computer Types”. www.unm.edu. Retrieved 2023-06-21.

- ^ “Original PDF”. dx.doi.org. doi:10.15438/rr.5.1.7. Retrieved 2023-06-21.

- ^ “Personal computer Definition & Meaning”. Dictionary.com. Retrieved 2023-06-21.

- ^ Dorf, Richard C. (2003-11-24). CRC Handbook of Engineering Tables. doi:10.1201/9780203009222. ISBN 9780429214356.

- ^ “Origins of the Internet”. 2011-09-03. Archived from the original on 2011-09-03. Retrieved 2023-06-21.

- ^ “History of the Internet & World Wide Web : 1) Internet Before Web”. www.netvalley.com. Retrieved 2023-06-21.

- ^ Townsend, Anthony M (2001). “The Internet and the Rise of the New Network Cities, 1969–1999”. Environment and Planning B: Planning and Design. 28 (1): 39–58. doi:10.1068/b2688. ISSN 0265-8135. S2CID 11574572.

- ^ “InfoWorld – Google Books”. web.archive.org. 2018-12-05. Retrieved 2023-06-21.

- ^ “Inline XBRL Viewer”. www.sec.gov. Retrieved 2023-06-21.

- ^ Blank, Grant; Reisdorf, Bianca C. (May 2012). “The participatory web: A user perspective on Web 2.0”. Information, Communication & Society. 15 (4): 537–554. doi:10.1080/1369118X.2012.665935. ISSN 1369-118X. S2CID 143357345.

- ^ Mahdavi Damghani, B. (2019). Data-driven models & mathematical finance: apposition or opposition? (Thesis). University of Oxford.

- ^ “Navigating the Digital Universe with 3D Graphics | SAP Blogs”. blogs.sap.com. Retrieved 2023-06-21.

- ^ Kelly, Heather (2017-06-29). “10 years later: The industry that the iPhone created”. CNNMoney. Retrieved 2023-06-21.

- ^ Zhong, Han-Sen; Wang, Hui; Deng, Yu-Hao; Chen, Ming-Cheng; Peng, Li-Chao; Luo, Yi-Han; Qin, Jian; Wu, Dian; Ding, Xing; Hu, Yi; Hu, Peng; Yang, Xiao-Yan; Zhang, Wei-Jun; Li, Hao; Li, Yuxuan (2020-12-18). “Quantum computational advantage using photons”. Science. 370 (6523): 1460–1463. doi:10.1126/science.abe8770. ISSN 0036-8075. PMID 33273064. S2CID 227254333.